Automated Acceptance Tests and Requirements Traceability

Tomo Popovic

This article illustrates an approach to automated acceptance testing in Java software development. Acceptance tests tie directly into the software requirements specification, and the key to maintainable tests is proper handling of traceability, both between the requirements and the implementation and between the requirements and the acceptance tests. Automating acceptance testing implies continuous validation of the software product and therefore continual verification of traceability. Proper use of development and testing tools benefits the process by keeping the requirements, acceptance tests, and software product in sync. Ultimately, the article argues that writing automated acceptance tests is traceability. One approach to automated acceptance testing, and its beneficial effect on the maintainability of the requirements specification, is illustrated using Java and the open source Concordion test suite [1,2].

The last decade or two have definitely been interesting times for software development methodologies. Agile development, test driven development, and extreme programming, along with a variety of tools, have changed the philosophy of software development and are still changing the way it is done. It is becoming clear (if it is not already) that both developing and owning a software product are affected by a constant need for change. During the course of a software development project it is not uncommon for the requirements specification to change by 30% or more [3]. A software product needs to be continuously maintained and grown, as if it were a plant such as a fruit tree [4]. Dealing with existing software products, some of which have been around for more than a dozen years, taught the author what it means to be hit with unexpected and unplanned changes, and that a software product needs continuous maintenance and growth.

Figure 1. Traceability is typically given in the form of matrices

One of the biggest challenges in software requirements management is handling traceability. Typically, traceability is given in matrix form (Fig. 1). The main purpose is to establish a bi-directional trace between requirements and component implementation as well as between requirements and acceptance tests. Maintaining traceability matrices manually can be a time-consuming nightmare. Modern requirements management tools provide features for this purpose, but the work can still be cumbersome and expensive.

An example traceability matrix is given in Fig. 2. It is a requirements vs. test cases traceability matrix. For each requirement there should be one or more acceptance tests defined. For example, use case requirement labeled UC 1.2 can be traced to test cases 1.1.2 and 1.2.1 (highlighted in the table).

Figure 2. Traceability matrix example: Requirements vs. Tests
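To make the matrix idea concrete, here is a minimal sketch of a requirements vs. tests matrix held in memory, with a forward trace, a backward trace, and a check for requirements that have no acceptance test. The class and method names are hypothetical and are not part of any tool discussed in this article; only the UC 1.2 mapping used in the usage note comes from the example above.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical in-memory form of a requirements vs. tests traceability matrix.
class TraceabilityMatrix {
    // Requirement ID -> IDs of the acceptance tests that cover it.
    private final Map<String, List<String>> testsByRequirement = new LinkedHashMap<>();

    // Registers a requirement even if no test covers it yet.
    void addRequirement(String requirementId) {
        testsByRequirement.computeIfAbsent(requirementId, id -> new ArrayList<>());
    }

    // Records that the given test traces back to the given requirement.
    void trace(String requirementId, String testId) {
        testsByRequirement.computeIfAbsent(requirementId, id -> new ArrayList<>()).add(testId);
    }

    // Forward trace: which tests cover this requirement?
    List<String> testsFor(String requirementId) {
        List<String> tests = testsByRequirement.get(requirementId);
        return tests == null ? new ArrayList<String>() : new ArrayList<String>(tests);
    }

    // Backward trace: which requirements does this test cover?
    List<String> requirementsFor(String testId) {
        List<String> requirements = new ArrayList<>();
        for (Map.Entry<String, List<String>> entry : testsByRequirement.entrySet()) {
            if (entry.getValue().contains(testId)) {
                requirements.add(entry.getKey());
            }
        }
        return requirements;
    }

    // Requirements that violate the "one or more acceptance tests" rule.
    List<String> uncoveredRequirements() {
        List<String> uncovered = new ArrayList<>();
        for (Map.Entry<String, List<String>> entry : testsByRequirement.entrySet()) {
            if (entry.getValue().isEmpty()) {
                uncovered.add(entry.getKey());
            }
        }
        return uncovered;
    }
}
```

Tracing UC 1.2 to tests 1.1.2 and 1.2.1 and then calling testsFor("UC 1.2") returns both test IDs. A requirement that was registered but never traced shows up in uncoveredRequirements(). Keeping such a structure up to date by hand is exactly the maintenance burden the rest of the article tries to remove.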

The table in the figure is an artificial example. However, tables like this are created and maintained manually (or semi-manually), which can be quite an undertaking. Requirements management tools help with handling traceability, but it is still far from easy. I will show how tables like this may not be needed if the right tools and automated tests are employed. We will see later that traceability becomes incorporated into the "live" requirements containing the acceptance test criteria, as well as into the test implementation code.

The main premise of this article is that writing and using automated acceptance tests is traceability. It has already been established that writing automated tests is programming, and that is something I fully agree with [5]. However, dealing with acceptance tests is more than just programming, since these tests should be specified by customers or business logic writers, not necessarily programmers. The challenge is to combine the efforts of business logic writers and developers in a painless and seamless way. If we succeed, we are rewarded with "live" traceability and a requirements specification that does not go stale. The requirements, acceptance tests, and implementation code stay in sync, which is necessary if we plan to keep the product adequate and use it for some time. This also has positive effects on the maintainability of our code, acceptance tests, and requirements specifications.

We will look at requirements vs. acceptance tests and requirements vs. component implementation traceability, and at how implementing automated acceptance tests results in inherited "live" traceability. The discussion covers the tools and an approach to automated acceptance tests in a Java development environment. The idea is to provide unbreakable traceability between requirements and component implementation as well as between requirements and acceptance tests, while overcoming the maintainability challenge and the costs associated with keeping the documentation up to date.

Background: Test Automation

As developers, we may try to argue against the need for automating acceptance tests, sometimes even supporting the argument by stating that our process already includes extensive use of unit tests. Experience and the literature teach us that testing and test automation should go the whole 200%: 100% for unit and integration tests, and another 100% for acceptance tests. The first set of tests makes sure that we write the code right, while the second set makes sure that we are writing the right code [6]. There is nothing wrong with redundancy here, as we want to cover our code with tests as much as possible. The major benefit of test automation is the insurance it provides when we run our tests as regression tests after changes are made.

Unit tests are typically written by programmers, and the main motivation is to verify the correctness of the code. In test driven development (TDD), for each function we first write a test that fails, and then we code and refactor our implementation until it passes the test and we are happy with the result ("red/green/refactor - the TDD mantra") [7]. Unit tests are definitely needed and developers are advised to write them: the more of the code that is covered by unit tests, the better. Writing unit tests requires self-discipline and a pragmatic approach from software developers.

Integration tests are important because they cover testing of the software product together with code that we cannot change. We want to make sure that our code, when integrated into the complete solution, still behaves and works as expected [4].

Acceptance tests are a slightly different story. As the name suggests, their primary purpose is verifying and accepting the behavior of the final product, namely the developed software. They provide an end-to-end test of the system as a whole, and they are easy for business logic writers and product owners to understand. The ultimate goal is for the software under development to conform to the criteria defined by a given set of acceptance tests, which are derived directly from the requirements specification. A successful run of the acceptance tests indicates to both developers and product owners (or business logic writers) that the software product satisfies the requirements specification.

Automated acceptance tests go even further: automating acceptance tests is critical because they need to be run every time a change is made. Running them manually would be prohibitively expensive and would prevent us from successfully maintaining and growing the product. An automated approach to unit and acceptance tests adds a regression testing aspect and gives us the power to verify the stability of our software, which results in the freedom to make changes and refactor [8,9]. Writing acceptance tests is not a discipline that belongs to developers only. Product owners, business logic writers, and software architects are typically the people who write the requirements specification, and it helps if the process assumes that the requirements are written in natural language (say, plain English). Developers and quality assurance staff are responsible for making the tests "live". By automating the running of acceptance tests we continuously perform a "health check" of our software product, which gives us freedom and security when we need to make changes or improvements. It is therefore important that the tools used for automating acceptance tests provide easy access to, and editing of, software requirements and test specifications.

Automating Acceptance Tests and Tools Selection for Java Development

When it comes to automated acceptance testing for Java development, two tools stand out: Concordion and Fitnesse [2,10]. Both are open source, and a quality common to both is how easily and quickly they can be put to work. Concept-wise they are similar and both are great products. This article puts more emphasis on Concordion, as it fits nicely into the approach and integrates easily with integrated development environments such as Netbeans and Eclipse [11,12]. I find it extremely easy to use and to include clients in the process. Because the requirements and corresponding acceptance tests are part of the project file set, it is possible to keep the source code, requirements specifications, acceptance tests, and fixture code in the same project folder and under the same version control. This tool setup seems to work well for small and medium size projects, and the approach can be extrapolated to larger projects. Please note that my focus here is on the method, without any intention of starting a debate on which tool is better. What matters is not the tool itself so much as how comfortable the development team is with using the tool and with including other participants and stakeholders in the process.

Figure 3. Acceptance tests automation tools provide "live" traceability

The tools selection and setup used to implement the concept applied to Java development is illustrated in Fig. 3. The following set of tools was successfully used for the approach:

- Development platform (Java), which provides the Java compiler and run-time environment.

- Version control (Subversion), which provides the repository and keeps track of all work and all changes in project files. It is the ultimate "undo" command for any software development team. It also allows multiple developers to access and modify source code and keeps track of all the changes, revisions, and versions.

- Integrated development environment capable of running tests (Netbeans), which provides the code editor, project file management, and check-in to and check-out from version control, and runs the compiler, debugger, and tests.

- Continuous integration (Hudson), which performs automated project builds. Hudson "simulates" a team member that periodically checks out the latest version of the project code, performs an automated build, and runs the tests. The build results are presented in a customizable web-based interface. Continuous integration is a must for a development team, as it quickly raises a red flag when something goes wrong.

- Unit test framework (JUnit, included with Netbeans), which provides tools to create unit tests.

- Automated acceptance test platform (Concordion), which provides a framework for writing and executing automated acceptance tests.

As depicted, the requirements specifications, fixture code (acceptance tests), and product implementation (which should include unit tests) are all stored in the version control repository, which in this illustration is Subversion [13]. All of the files are available through the version control server, and team members can access them using different tools. Continuous integration (Hudson) periodically checks out the latest code and performs a project build [14]. Any failure to build the project or pass all tests is indicated (artistically represented here with a traffic light). Developers and test writers can access the files using a development IDE (Netbeans, Eclipse) and work on the requirements, tests, and product. Business logic writers and product owners (client, customer) can access the requirements specification either through a development IDE or simply by using text or HTML editors.

The tools shown are just one combination, and we are blessed with a variety of high quality tools coming from the open source world. Of course, one can select different tools for each purpose, or even combine open source and commercial tools where needed. In addition to the tools shown, there may be a need for GUI, web, or other interface test tools to provide broader solution tests and make our acceptance tests as end-to-end as possible [15-17].

Example: Project Structure and Implementation of Tests

To better illustrate the organization of files, let us look at an example project in a development IDE, which in this case is Netbeans (Fig. 4). Concordion requires no installation: all that is needed is to download the latest version and include the library files in your Java project [2]. The use of Concordion assumes that the requirements are written in natural language (e.g. "plain English") and kept in a set of HTML files. The requirements might originally be written in a word processor or text editor, but for use with Concordion they ultimately need to be converted to HTML. HTML may be a little cumbersome, especially for product owners or business logic personnel, but the learning curve is extremely short and only basic HTML skills are needed. Furthermore, it is useful to organize the requirements and corresponding HTML files into folders, so that there is a root or home folder for the specifications and each specific behavior, with its relevant details, is organized into a subfolder (Fig. 4).

It is important to note that, in this example, the requirements HTML files are stored in the "spec" package, and each set of files relevant to a specific behavior has its own subfolder. There are two subfolders in the example, "config" and "login", but we can easily envision having dozens or hundreds of these. Some of the requirements subfolders can be broken down further if needed, but creating many levels is not recommended (I try to keep it to three at most). In this particular case the files are part of the Netbeans project, but they could be written and organized the same way by product owners or business logic writers. Concordion uses this folder structure to create breadcrumb navigation at the top of the output HTML documents.

Figure 4. Concordion with Netbeans: project structure and files organization

Let us have a more detailed look at some of the files. The Login class is given as an example of Java code that belongs to our system under design (Fig. 5). The corresponding requirements are given in the form of an HTML file. Each HTML specification should be accompanied by Java fixture code. The term "fixture code" is used for the acceptance test code we write to connect the requirements and test input data with the system under design that is being tested. For Concordion to work, the fixture code should be stored in a class file with the same name as the corresponding HTML file plus the "Test" suffix. For example, "LoginTest.java" pairs with "Login.html" (Fig. 6). In this particular example, Login specifies a more complicated behavior broken down into a set of simple behaviors. The Login specification contains references to other HTML specifications and indirectly runs the tests tied to them. The LoginTest class is empty, as it only runs the links stored in the corresponding Login HTML file. You can think of it as a suite of tests (1.1 Login) where each test is referenced by its HTML link and corresponds to a simple behavior: 1.1.1 System Login, 1.1.2 Sign Up, 1.1.3 Password Validation, 1.1.4 Forgot Password, etc.

The connection between the requirements specifications given in HTML and the fixture code is established through Concordion HTML tags ("concordion:") inserted into the HTML file. Each HTML requirements specification document should have the "concordion" namespace defined at the top of the file (the xmlns:concordion value) and use "concordion:" tags to insert Concordion instrumentation hidden inside the HTML code. This instrumentation directs Concordion execution. Some example Concordion commands are: run, set, execute, assertEquals, etc. [2]. Concordion tags establish a clear connection with the corresponding fixture code, which extends a test class from the Concordion library and is in fact also a JUnit test case.
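The file pairing convention and the run command can be sketched as follows. The comment shows what a link in the suite specification might look like. The FixtureNaming helper is purely hypothetical and only spells out the naming rule in code; Concordion applies the convention itself and does not expose such a method.

```java
// A link in the suite specification (Login.html) might look like this. The
// concordion:run attribute asks Concordion to run the linked specification
// together with its fixture:
//
//   <a concordion:run="concordion" href="PasswordValidation.html">1.1.3 Password Validation</a>
//
// The enclosing document declares the namespace on its root element:
//
//   <html xmlns:concordion="http://www.concordion.org/2007/concordion">

// Hypothetical helper that states the naming convention explicitly.
class FixtureNaming {
    private static final String SPEC_SUFFIX = ".html";

    // Returns the fixture source file expected for a specification file,
    // for example Login.html -> LoginTest.java.
    static String fixtureFileFor(String specFileName) {
        if (!specFileName.endsWith(SPEC_SUFFIX)) {
            throw new IllegalArgumentException("Not an HTML specification: " + specFileName);
        }
        String baseName = specFileName.substring(0, specFileName.length() - SPEC_SUFFIX.length());
        return baseName + "Test.java";
    }
}
```

With this rule, fixtureFileFor("Login.html") returns "LoginTest.java", matching the pairing described above.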

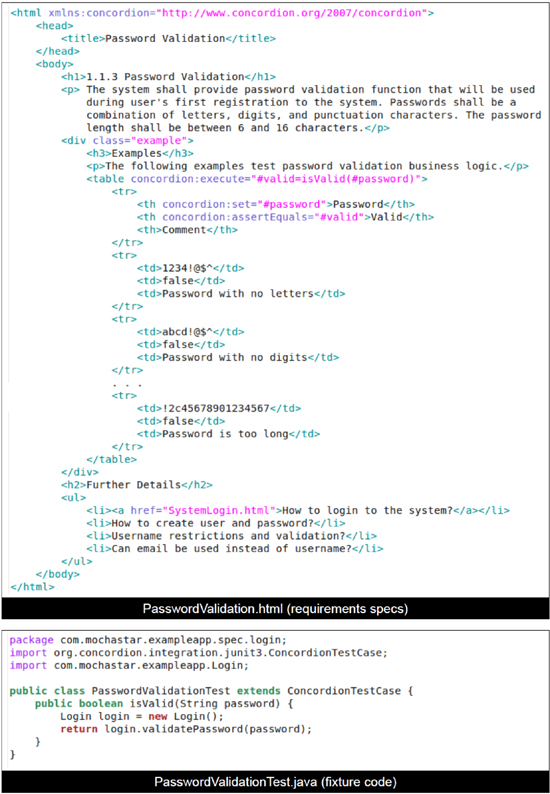

To illustrate how the HTML files with requirements are tied into the code of our system under design, please look at the password validation example in Fig. 7. The requirements are entered in plain English and illustrated with example data that can be used for acceptance testing. In this particular case the example data is organized within an HTML table, but it could also be in free-format HTML text. The fixture code corresponding to the password validation requirements is given in the file "PasswordValidationTest.java" (please note the Test suffix). The fixture code contains an isValid method that instantiates an object of the Login class (which belongs to our system under design) and returns true or false depending on whether the password passes validation.
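Since the figure is not reproduced here, the following is a minimal sketch of what such a specification and fixture might look like. Several things in it are assumptions rather than the original code: the validation rule (at least six characters with a letter and a digit), the isPasswordValid method name, and the example rows. To keep the sketch free of external libraries, the fixture is shown as a plain class. In a real project it would extend the Concordion test base class (for example ConcordionTestCase in Concordion 1.x) so that the IDE runs it as a JUnit test.

```java
/*
 * Sketch of the matching specification, PasswordValidation.html.
 * The execute command calls the fixture once per table row, set binds the
 * cell text to a variable, and assertEquals compares the result with the cell.
 *
 * <html xmlns:concordion="http://www.concordion.org/2007/concordion">
 * <body>
 *   <p>Passwords must be hard to guess.</p>
 *   <table concordion:execute="#result = isValid(#password)">
 *     <tr>
 *       <th concordion:set="#password">Password</th>
 *       <th concordion:assertEquals="#result">Valid?</th>
 *     </tr>
 *     <tr><td>secret1</td><td>true</td></tr>
 *     <tr><td>abc</td><td>false</td></tr>
 *   </table>
 * </body>
 * </html>
 */

// Part of the system under design. The rule below is an assumption made
// for illustration only.
class Login {
    boolean isPasswordValid(String password) {
        if (password == null || password.length() < 6) {
            return false;
        }
        boolean hasLetter = false;
        boolean hasDigit = false;
        for (char c : password.toCharArray()) {
            if (Character.isLetter(c)) {
                hasLetter = true;
            }
            if (Character.isDigit(c)) {
                hasDigit = true;
            }
        }
        return hasLetter && hasDigit;
    }
}

// Fixture code: the thin layer between the specification and the system.
// The Concordion base class is deliberately omitted in this sketch.
class PasswordValidationTest {
    public boolean isValid(String password) {
        return new Login().isPasswordValid(password);
    }
}
```

The fixture holds no validation logic of its own. It only delegates to Login, so the rule lives in one place and the specification table remains the single source of example data.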

Figure 5. Example code that is being tested

Figure 6. Example test suite: referencing multiple acceptance tests

Figure 7. Password validation example specification and fixture code

The password validation example is taken from excellent references discussing the maintainability of acceptance tests [5,18], but is shown here with respect to Java and Concordion. The approach in this article conforms to the ideas regarding maintainability of acceptance tests and, in a way, extends them to requirements specifications.

When programming tests and writing test fixture code, we use Concordion as a library and simply follow the template. Netbeans (or Eclipse) sees Concordion fixture code as JUnit tests. The whole concept enables easy running (the same as JUnit) and easy integration with automated build tools. I strongly encourage you to check the tutorials on the Concordion website for more details and examples [2]. Referring to Figures 4-7, it is important to note that the Concordion library, the requirements written as HTML files, and the acceptance tests written using the Concordion library are now all part of the Java project's set of files (in this case handled by Netbeans). This is very important, as it is now easy to keep all of the project files together in the version control repository and make them available to all development team members.

Please note that the test output files (HTML) are by default stored in a temporary folder, but the target folder can be specified. This is important, as the test output can also be created by the continuous integration tool (e.g. Hudson), and we could have the output HTML files generated automatically and made available on the internal website we use to monitor project health. Fig. 8 shows the test output files for the given example as seen in a web browser. The "Spec.html" file is our top level HTML file, which references "Login.html". The "Login.html" output reports an error (red) for the Sign Up functionality because "SignUp.html" and the corresponding "SignUpTest.java" have not been created yet, although the Concordion run command was already inserted into the HTML file. System Login and Password Validation are shown in green, as those tests ran successfully. The Forgot Password functionality is not highlighted because no Concordion commands have been inserted there yet, so it behaves as plain HTML text. Finally, the password validation test output is given to illustrate a successful run of a specific behavior. The first section explains the "Why" part of the requirements specification. The example section then demonstrates the behavior with the data used for running the acceptance tests. At the end, HTML allows for easy linking between requirements, which is used here to refer to further details.
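As a rough sketch of the output folder choice, assume the concordion.output.dir system property described in the Concordion documentation, with a fall-back to a "concordion" folder under the JVM temporary directory. The class below mirrors that documented behaviour for illustration. It is a hypothetical helper, not Concordion's own code.

```java
import java.io.File;

// Hypothetical helper that mirrors how the report folder is chosen.
class ConcordionOutputDir {
    // System property assumed to be read by Concordion for the target folder.
    static final String PROPERTY = "concordion.output.dir";

    // Uses the configured folder if present, otherwise a "concordion"
    // folder under the JVM temporary directory.
    static File resolve() {
        String configured = System.getProperty(PROPERTY);
        if (configured != null && !configured.isEmpty()) {
            return new File(configured);
        }
        return new File(System.getProperty("java.io.tmpdir"), "concordion");
    }
}
```

On a continuous integration server the property would typically be passed on the JVM command line of the build (for example -Dconcordion.output.dir=...), pointing at a folder that the internal website serves.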

To summarize, the requirements need to be written clearly and stored in HTML files. The key is to understand that the acceptance tests relevant to each requirement are referenced inside its specification. For example, if we have a requirement called "1.1.1 System Login", we need to define the acceptance test criteria within its specification. The acceptance test data is best specified through example sections ("Given-When-Then"), and the data is tied to the fixture code using Concordion tags. The fixture code in turn interacts with the system being tested. Complex requirements need to be broken down into simple ones, and the idea is to have at least one acceptance test for each of these simple behavior requirements [2]. The process of writing requirements and test specifications is further simplified by the use of HTML templates for test suites and test descriptions. For each test it is important to provide examples that can be used directly as input and output data for the acceptance tests.

From the requirements writer's point of view, we can see the need for a good requirements editor tool that could be supplied to clients and guide them in writing specifications, producing HTML that follows the templates for a requirements/test suite or for individual requirements/tests. Such a tool would fit nicely into the setup as the missing puzzle piece, possibly even as a Netbeans or Eclipse plug-in. For members of development teams, an open source IDE (Netbeans, Eclipse) is a good choice, since it typically provides a good HTML editor and easy check-out from and check-in to the version control repository.

Figure 8. Login and password validation example test output

"Live" Requirements and Traceability: The Foundation for Change Management

We have seen how the presented approach provides inherited traceability: requirements vs. acceptance tests and requirements vs. implementation. The requirements and the acceptance test specification and implementation become one, which in turn provides a good foundation for change management.

Traceability: requirements vs. acceptance tests. Breaking down complex requirements into a set of simple ones, as illustrated by the example above, is the key to properly defining acceptance tests. In addition, by doing so we create a requirements specification in a form that is easy to follow and maintain. The end result is that the requirements specifications and acceptance test specifications become one, and the traceability between the two is built into the correctness of the specifications and the provided examples. Proper use of templates, in this case HTML files, makes the whole process easier and more streamlined. This is true both for test suites (general descriptions) and for individual tests, such as a simple behavior description with detailed examples that can be used for coding acceptance tests.

Traceability: requirements vs. implementation. The fixture code provides traceability between the requirements/tests and the code of the system under design. The connection between the specification (requirements/tests) and the fixture code is achieved using Concordion HTML tags. It is critical to note that both requirements vs. tests and requirements vs. implementation traceability now become "live" and have to be maintained and kept up to date for the software product to keep passing the acceptance tests. This is critical not only when developing a new product, but also when growing and maintaining an existing one.

Change Management. The proper use of tools such as Concordion or Fitnesse lets us establish a maintainable structure with "live" requirements and test specifications, traceability embedded in the fixture code, and the actual implementation of the product. Every time we change the requirements, the change needs to be reflected in the fixture code for the automated tests, which in turn results in the need to implement the change in the system under design or software product. It also works in the opposite direction: any change in the code that affects the outcome of the acceptance tests is highlighted in the very next run of the tests (Fig. 9). The continuous integration tool periodically goes into the version control repository and makes a fresh build of the project. It should pick up on anything going wrong, even when several team members are checking in their work. You can think of continuous integration as an additional team member that continuously monitors your project code and makes sure the project compiles with no errors and the automated tests run successfully. Acceptance test automation in combination with unit tests and continuous integration provides an excellent foundation for regression testing: fear of change, no more!
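The "opposite direction" can be sketched in a few lines: the examples from a specification are replayed against the current implementation, and every mismatch is reported. All names, the example data, and the two password rules below are hypothetical. A tool such as Concordion does this replaying for us and marks the failing examples in red in the output HTML.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.function.Predicate;

// Hypothetical stand-in for the examples kept in a specification table.
class AcceptanceExamples {
    // Input -> expected outcome, as agreed with the requirements writer.
    static Map<String, Boolean> passwordExamples() {
        Map<String, Boolean> examples = new LinkedHashMap<>();
        examples.put("secret1", true);
        examples.put("abc", false);
        return examples;
    }

    // Replays every example against the implementation and returns the
    // inputs whose actual outcome differs from the specified one.
    static List<String> failedExamples(Map<String, Boolean> examples,
                                       Predicate<String> implementation) {
        List<String> failed = new ArrayList<>();
        for (Map.Entry<String, Boolean> example : examples.entrySet()) {
            boolean actual = implementation.test(example.getKey());
            if (actual != example.getValue()) {
                failed.add(example.getKey());
            }
        }
        return failed;
    }
}
```

Suppose the implementation accepts passwords of six or more characters: failedExamples returns an empty list. If a developer later raises the minimum to eight characters without updating the specification, the "secret1" example is reported as failed on the next run, which is the signal that the requirements and the code have drifted apart.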

The described selection and setup of tools works well both for starting a new project and for maintaining and growing an existing one. For example, we can start with a "walking skeleton" based on a software specification containing use case briefs that do not provide the details needed for a full-blown set of acceptance tests [4]. As the requirements iteratively grow from briefs into fully dressed use cases containing all the steps and alternative paths, the set of acceptance tests will grow and provide better coverage [19, 20]. The approach enables all members of the development team to instantly become aware of new requirements details and how those details affect the system being developed.

Figure 9. Any change affecting passing acceptance tests will be easily caught

Conclusions

Acceptance tests play a key role in the software development process. Implementing automated acceptance tests using tools such as Concordion or Fitnesse brings the development process to a completely different level and provides several benefits for developers, clients, business logic writers, and quality assurance personnel. A clean and straightforward approach is needed to keep the requirements free of "clutter" and nicely coupled with the implementation through fixture code. The use of automated acceptance test tools ultimately ties the acceptance tests into the requirements specification, which results in better maintainability, keeps the requirements specification in sync with the system under development, and provides inherited traceability between the requirements and acceptance tests.

The article described an approach to automated acceptance tests for Java development using Concordion and Netbeans. One of the great benefits of the described approach and tool selection is that both the requirements documentation and the acceptance tests are part of the project file structure. They are therefore kept in the version control repository together with the software code. Software requirements and acceptance tests can be written and maintained using the integrated development environment as well as the version control tools. The use of continuous integration tools allows clients, business logic staff, and quality assurance staff to easily access and, if needed, participate in the requirements change process. A positive "side effect" is end-to-end regression testing, which provides additional security when changes are being made.

It is important to note here that the writing of requirements is not delegated to developers. The core of the requirements specification is kept in a simple file format (ASCII text, HTML, or Wiki) and can easily be accessed and edited by clients or business logic writers. The key is that this process requires the developers' full attention and participation, which in turn results in an updated requirements specification, implementation code, and acceptance tests that are fully and continuously in sync. Maintainability and traceability come "naturally" and are no longer a major headache.

References

- Java: http://www.java.com

- Concordion: http://www.concordion.org

- Capers Jones, "Applied Software Measurement", Third Edition, McGraw Hill, 2008

- Steve Freeman and Nat Pryce, "Growing Object-Oriented Software", Addison-Wesley Professional, 2010.

- Dale H. Emery, "Writing Maintainable Automated Acceptance Tests", presented at Agile Testing Workshop, Agile Development Practices, Orlando, Florida, November 2009.

- Robert C. Martin, "UML for Java(tm) Programmers", Prentice Hall, 2003

- Kent Beck, "Test Driven Development: By Example", Addison-Wesley Professional, 2002.

- Michael Feathers, "Working Effectively with Legacy Code", First Edition, Prentice Hall, 2004.

- Robert C. Martin, "Clean Code", First Edition, Prentice Hall, 2008.

- Fitnesse: http://www.fitnesse.org

- Netbeans: http://www.netbeans.org

- Eclipse: http://www.eclipse.org

- Subversion: http://subversion.apache.org/

- Selenium: https://www.selenium.dev/

- Abbot: http://abbot.sourceforge.net

- Alistair Cockburn, "Writing Effective Use Cases", Addison Wesley, 2000.

- Kulak and Guiney, "Use Cases - Requirements in Context", Second Edition, Pearson Education, 2003.


This article was originally published in the Spring 2011 issue of Methods & Tools
