Continuous Delivery Using Build Pipelines With Jenkins and Ant

James Betteley, https://devopsnet.com/

My idea of a good build system is one which will give me fast, concise, relevant feedback, but I also want it to produce a proper finished article when I've checked in my code. I'd like every check-in to result in a potential release candidate. Why? Well, why not?

I used to employ a system where formal release candidates were produced separately from my check-in builds (also known as "snapshot" builds). This encouraged people to treat snapshot builds as second rate, because the main focus was on the release builds. However, if every build is a potential release candidate, then the focus on each build increases. It also means I need to make sure every build goes through the most rigorous testing possible, and I would like to see a comprehensive report on the stability and design of the build before it gets released. I would also like to do all of this automatically, with as little human intervention as possible (preferably none at all).

This presents us with a problem: we want instant feedback on check-in builds, and to have full static analysis performed on them and yet we still want every check-in build to undergo a full suite of testing, be packaged correctly AND be deployed to our test environments. Clearly this will take a lot longer than I'm prepared to wait! The solution to this problem is to break the build process down into smaller sections, and run them in parallel if necessary.

Pipelines to the Rescue!

The concept of build pipelines has been around for a few years (Sam Newman is often credited with first documenting the idea back in early 2005). So it's nothing new, but it's not yet standard practice, which is a pity because I think it has some wonderful advantages. The concept is simple: the build as a whole is broken down into sections, such as the unit test, acceptance test, packaging, reporting and deployment phases. The pipeline phases can be executed in series or in parallel, and if one phase is successful, the build automatically moves on to the next phase (hence the relevance of the name "pipeline").

A good build pipeline will have a relatively high degree of parallelization. That is to say, the pipeline tasks won't simply all run in series - otherwise you're doing little more than daisy-chaining together a collection of build jobs, and the value of the system is greatly reduced.

Suppose our pipeline is just a series of individual tasks. This might be sufficient for small, quick-running builds and deployments. However, if this were a large project and our unit and acceptance tests were very slow running, and our deployment steps took a long time to complete, then this pipeline might take hours to complete. Chances are you will have finished work for the day and gone home before the final stage executes! This is far from ideal, obviously, because if there's a problem with any part of your pipeline, you want to know about it as soon as possible so that you can fix it.

A good practice is to parallelize the tasks where possible. Acceptance tests, unit-test coverage and static analysis can often be performed in parallel. My own personal preference is to run unit-tests as a first step, and if they pass then run as many other tasks as possible in parallel. This assumes that the unit tests take less than 3 minutes to complete. If they take longer than 5 minutes I would recommend running the unit tests and acceptance tests in parallel on check-in.
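To put rough numbers on the benefit, here is a minimal sketch that compares the elapsed time of a purely serial pipeline with one where everything after the unit tests runs in parallel. The stage names and durations are invented for illustration, and the model assumes there are always enough build agents to run every ready stage at once.

```python
# Hypothetical stage durations in minutes (invented for illustration).
DURATIONS = {
    "unit_tests": 3,
    "acceptance_tests": 20,
    "coverage": 10,
    "static_analysis": 8,
}

# Each stage lists the stages that must succeed before it can start.
DEPENDS_ON = {
    "unit_tests": [],
    "acceptance_tests": ["unit_tests"],
    "coverage": ["unit_tests"],
    "static_analysis": ["unit_tests"],
}


def serial_minutes(durations):
    """Elapsed time when every stage runs one after another."""
    return sum(durations.values())


def parallel_minutes(durations, depends_on):
    """Elapsed time when a stage starts as soon as its dependencies finish."""
    finish = {}

    def finish_time(stage):
        if stage not in finish:
            start = max((finish_time(dep) for dep in depends_on[stage]), default=0)
            finish[stage] = start + durations[stage]
        return finish[stage]

    return max(finish_time(stage) for stage in durations)


print(serial_minutes(DURATIONS))                # 41
print(parallel_minutes(DURATIONS, DEPENDS_ON))  # 23
```

With these made-up figures the parallel layout finishes in the time of the unit tests plus the slowest downstream stage, rather than the sum of all stages.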

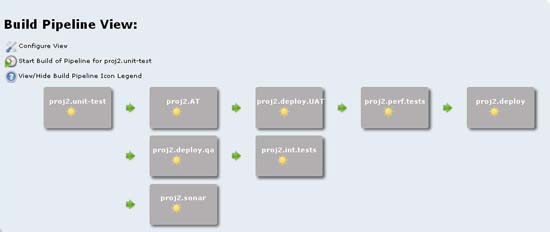

The example below shows a pipeline with parallelization:

In this example we're executing the Acceptance Tests (AT) at the same time as running the build reports and deploying our project to our QA/integration test environment. In the next stage we're executing integration tests while simultaneously deploying our project to the UAT environment. This will then kick off the performance tests.

Continuous Delivery

Continuous Delivery has also been around for a while. It's basically the logical evolution of Continuous Integration. The practice of Continuous Integration covers many of the fundamentals of Continuous Delivery: unit testing, static analysis, "failing fast" and automated testing are all core to Continuous Integration. Continuous Delivery simply builds on this foundation and extends it further into the domain of deployment and project delivery.

Continuous Delivery, while not a new concept, has enjoyed a recent increase in exposure, thanks largely to the publication of Jez Humble and David Farley's excellent book "Continuous Delivery". Again the concept is simple: roughly speaking, it means that every build gets made available for deployment to production if it passes all the quality gates along the way.

Continuous Delivery is sometimes confused with Continuous Deployment. Both follow the same basic principle. The main difference is that with Continuous Deployment it is implied that each and every successful build will be deployed to production, whereas with Continuous Delivery it is implied that each successful build will be made available for deployment to production. The decision of whether or not to actually deploy the build to the production environment is entirely up to you.
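The difference between the two practices comes down to what happens at the very end of the pipeline. The following is only an illustrative sketch: the `Build` class, the `finish_pipeline` function and the plain lists standing in for a release repository and a production environment are all hypothetical names, not part of any real tool.

```python
from dataclasses import dataclass


@dataclass
class Build:
    number: int
    passed_all_gates: bool  # True only if every pipeline stage succeeded


def finish_pipeline(build, release_repository, production, continuous_deployment=False):
    """Decide what happens to a build once the pipeline has finished."""
    if not build.passed_all_gates:
        return "rejected"
    # Continuous Delivery: every successful build becomes a release candidate.
    release_repository.append(build.number)
    if continuous_deployment:
        # Continuous Deployment: the same build also goes live automatically.
        production.append(build.number)
        return "deployed"
    return "available for deployment"


repo, prod = [], []
print(finish_pipeline(Build(41, False), repo, prod))  # rejected
print(finish_pipeline(Build(42, True), repo, prod))   # available for deployment
print(finish_pipeline(Build(43, True), repo, prod, continuous_deployment=True))  # deployed
print(repo, prod)  # [42, 43] [43]
```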

Continuous Delivery using Build Pipelines

You can have continuous delivery without using build pipelines, and you can use build pipelines without doing continuous delivery, but the fact is they seem made for each other. Here's my example framework for a continuous delivery system using build pipelines.

I check some code in to source control - this triggers some unit tests. If these pass it notifies me, and automatically triggers my acceptance tests AND produces my code-coverage and static analysis report at the same time. If the acceptance tests all pass my system will trigger the deployment of my project to an integration environment and then invoke my integration test suite AND a regression test suite. If these pass they will trigger another deployment, this time to UAT and a performance test environment, where performance tests are kicked off. If these all pass, my system will then automatically promote my project to my release repository and send out an alert, including test results and release notes.

So, in a nutshell, my "template" pipeline will consist of the following stages:

- Unit-tests

- Acceptance tests

- Code coverage and static analysis

- Deployment to integration environment

- Integration tests

- Scenario/regression tests

- Deployments to UAT and Performance test environment

- More scenario/regression tests

- Performance tests

- Alerts, reports and Release Notes sent out

- Deployment to release repository
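One way to picture this template is as a list of stage groups, where the stages inside a group run side by side and the next group only starts if the whole group passed. The sketch below is a simplification under that assumption (in the description above, for example, the deployment depends only on the acceptance tests, not on the reports). The stage identifiers and their grouping are my own reading of the list, and `run_stage` is a hypothetical callback standing in for a real build job.

```python
from concurrent.futures import ThreadPoolExecutor

# Template stages grouped by what can run at the same time (illustrative names).
PIPELINE = [
    ["unit_tests"],
    ["acceptance_tests", "coverage_and_static_analysis"],
    ["deploy_to_integration"],
    ["integration_tests", "regression_tests"],
    ["deploy_to_uat", "deploy_to_performance_env"],
    ["more_regression_tests", "performance_tests"],
    ["send_alerts_and_release_notes", "deploy_to_release_repository"],
]


def run_pipeline(pipeline, run_stage):
    """Run each group in parallel and stop after the first group with a failure.

    run_stage takes a stage name and returns True on success.
    Returns (stages_run, failed_stages).
    """
    stages_run = []
    for group in pipeline:
        with ThreadPoolExecutor(max_workers=len(group)) as pool:
            results = list(pool.map(run_stage, group))
        stages_run.extend(group)
        failed = [stage for stage, ok in zip(group, results) if not ok]
        if failed:
            return stages_run, failed
    return stages_run, []


# Pretend the integration tests fail; nothing after that group is started.
ran, failed = run_pipeline(PIPELINE, lambda stage: stage != "integration_tests")
print(failed)                  # ['integration_tests']
print("deploy_to_uat" in ran)  # False
```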

Introducing the Tools

Thankfully, implementing continuous delivery doesn't require any special tools outside of the usual toolset you'd find in a normal Continuous Integration system. It's true to say that some tools and applications lend themselves to this system better than others, but I'll demonstrate that it can be achieved with the most common/popular tools out there.

Who is this Jenkins person?

Jenkins is an open-source Continuous Integration application, like Hudson, CruiseControl and many others (it's basically Hudson, or was Hudson, but isn't Hudson any more. It's a trifle confusing*, but it's not important right now!). So, what is Jenkins? Well, as a CI server, it's basically a glorified scheduler, a cron job if you like, with a swish front end. OK, so it's a very swish front end, but my point is that your CI server isn't usually very complicated: in a nutshell, it just executes the build scripts whenever there's a trigger. There's a more important aspect than just this though, and that's the fact that Jenkins has a build pipeline plugin, which was written recently by Centrum Systems. This plugin gives us exactly what we want: a way of breaking down our builds into smaller loops and running stages in parallel.

Ant

Ant has been possibly the most popular build scripting language for the last few years. It's been around for a long while, and its success lies in its simplicity. Ant is an XML-based scripting language tailored specifically for software build tasks, particularly for Java. (NAnt is the .NET version of Ant and is almost identical.)

Sonar

Sonar is a quality measurement and reporting tool, which produces metrics on build quality such as unit test coverage (using Cobertura) and static analysis results (from FindBugs, PMD and Checkstyle). I like to use Sonar as it provides a very readable report and contains a great deal of useful information all in one place.

Setting up the Tools

Installing Jenkins is incredibly simple. There's a Debian package for operating systems such as Ubuntu, so you can install it using apt-get. For Red Hat users there is an RPM, so you can install it via yum.

Alternatively, if you're already running Tomcat v5 or above, you can simply deploy the jenkins.war to your Tomcat container.

Yet another alternative, and probably the simplest way to quickly get up and running with Jenkins is to download the war and execute: java -jar jenkins.war

This will run Jenkins through its own Winstone servlet container.

You can also use this method for installing Jenkins on Windows, and then, once it's up and running, you can go to "Manage Jenkins" and click on the option to install Jenkins as a Windows Service! There's also a Windows installer, which you can download from the Jenkins website.

Ant is also fairly simple to install; however, you'll need the Java JDK installed as a prerequisite. To install Ant itself you just need to download and extract the archive, and then create the environment variable ANT_HOME (point this to the directory you extracted Ant into). Then add ${ANT_HOME}/bin (or %ANT_HOME%\bin if you're on Windows) to your PATH, and that's about it.

Configuring Jenkins

One of the best things about Jenkins is the way it uses plugins, and how simple it is to get them up and running. The "Manage Jenkins" page has a "Manage Plugins" link on it, which takes you to a list of all the available plugins for your Jenkins installation.

To install the build pipeline plugin, simply put a tick in the checkbox next to "build pipeline plugin" (it's 2/3 of the way down on the list) and click "install". It's as simple as that.

The Project

The project I'm going to create for the purpose of this example is going to be a very simple Java web application. I'm going to have a unit test and an acceptance test stage. The build system will be written in Ant and it will compile the project and run the tests, and also deploy the build to a Tomcat server. Sonar will be used for producing the reports such as test coverage and static analysis.

The Pipelines

For the sake of simplicity, I've only created 6 pipeline sections:

- Unit test phase

- Acceptance test phase

- Deploy to test phase

- Integration test phase

- Sonar report phase

- Deploy to UAT phase

The successful completion of the unit tests will initiate the acceptance tests. Once these complete, 2 pipeline stages are triggered:

- Deployment to a test server

and

- Production of Sonar reports.

Once the deployment to the test server has completed, the integration test pipeline phase will start. If these pass, we'll deploy our application to our UAT environment.

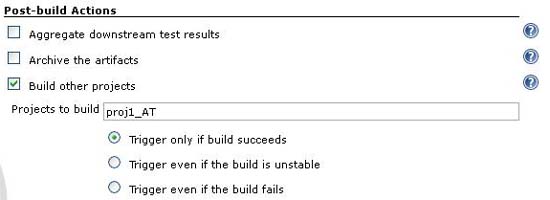

To create a pipeline in Jenkins we first have to create the build jobs. Each pipeline section represents 1 build job, which in this case runs just 1 Ant task. You then have to tell each build job about the downstream build which it must trigger, using the "build other projects" option.

Obviously you only want each pipeline section to do the single task it's designed to do, i.e. I want the unit test section to run just the unit tests, and not the whole build. You can easily do this by targeting the exact section(s) of the build file that you want to run. For instance, in my acceptance test stage, I only want to run my acceptance tests. There's no need to do a clean, or recompile my source code, but I do need to compile my acceptance tests and execute them, so I choose the targets "compile_ATs" and "run_ATs" which I have written in my Ant script. The build job configuration page allows me to specify which targets to call.
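Outside of Jenkins, the same idea amounts to passing only the wanted target names to the `ant` command. Here is a small sketch of a job-to-targets mapping. Only "compile_ATs" and "run_ATs" come from the build described here; the other job names, target names and the `build.xml` file name are assumptions made for the example.

```python
import shlex

# Which Ant targets each pipeline job should call.
JOB_TARGETS = {
    "unit-tests": ["clean", "compile", "run_unit_tests"],  # hypothetical targets
    "acceptance-tests": ["compile_ATs", "run_ATs"],        # targets named in the text
    "deploy-to-test": ["deploy_test"],                     # hypothetical target
}


def ant_command(job, build_file="build.xml"):
    """Build the argument list for running only the targets a job needs."""
    return ["ant", "-f", build_file] + JOB_TARGETS[job]


print(shlex.join(ant_command("acceptance-tests")))
# ant -f build.xml compile_ATs run_ATs
```

The returned list could be handed to `subprocess.run(..., check=True)` on a machine where Ant is installed, so that a failing target fails the stage.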

Once the 6 build jobs are created, we need to make a new view, so that we can start to visualise this as a pipeline.

We now have a new pipeline! The next thing to do is kick it off and see it in action.

Oops! It looks like the deploy to QA has failed. It turns out to be an error in my deploy script. But what this highlights is that the Sonar report is still produced in parallel with the deploy step, so we still get our build metrics! This functionality can become very useful if you have a large number of different tests which could all be run at the same time, for instance performance tests or OS/browser-compatibility tests, which could all be running on different operating systems or web browsers simultaneously.
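The behaviour seen here follows directly from the way the jobs trigger one another. As a rough model (the job identifiers below are made up, and this is a simulation rather than anything Jenkins itself runs), each job triggers its downstream jobs only when it passes:

```python
from collections import deque

# Downstream triggers for the six jobs described above (illustrative names).
DOWNSTREAM = {
    "unit_tests": ["acceptance_tests"],
    "acceptance_tests": ["deploy_to_test", "sonar_report"],
    "deploy_to_test": ["integration_tests"],
    "sonar_report": [],
    "integration_tests": ["deploy_to_uat"],
    "deploy_to_uat": [],
}


def simulate(first_job, failing=()):
    """Return {job: "passed" | "failed"} for every job that was triggered.

    Jobs that were never triggered do not appear in the result.
    """
    results = {}
    queue = deque([first_job])
    while queue:
        job = queue.popleft()
        if job in failing:
            results[job] = "failed"  # a failed job triggers nothing downstream
            continue
        results[job] = "passed"
        queue.extend(DOWNSTREAM[job])
    return results


print(simulate("unit_tests", failing={"deploy_to_test"}))
# {'unit_tests': 'passed', 'acceptance_tests': 'passed',
#  'deploy_to_test': 'failed', 'sonar_report': 'passed'}
```

The failed deployment stops the integration tests and the UAT deployment from starting, but the Sonar report sits on a separate branch and still runs.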

Finally, I've got my deploy scripts working so all my stages are looking green! I've also edited my pipeline view to display the results of the last 3 pipeline builds.

Alternatives

The pipelines plugin also works for Hudson, as you would expect. However, I'm not aware of such a plugin for Bamboo. Bamboo does support the concept of downstream builds, but that's really only half the story here. The pipeline "view" in Jenkins is what really brings it all together. TeamCity also supports "Build Chains" which is the same as a downstream build. It also allows you to break down your builds into several parts which can be run in series or in parallel, which is much the same, in principle, as a pipeline. However, as with Bamboo this functionality isn't encapsulated within a pipeline "view" yet.

CruiseControl, which is a very popular lightweight Continuous Integration System, also supports downstream builds, but beyond that it doesn't offer itself to supporting build pipelines.

"Go", the enterprise Continuous Integration effort from ThoughtWorks not only supports pipelines, but it was pretty much designed with them in mind. Suffice to say that it works exceedingly well, in fact, I use it every day at Caplin Systems where I work. On the downside though, the enterprise version costs money, whereas Jenkins doesn't. However, there is a "free" alternative, the Go Community Edition, which still apparently has some support for pipelines. What Go (the enterprise edition) does offer though, is a wonderful facility for managing multiple build agents (at Caplin we're running about 150 build agents) which allows us to create a build grid. It's very important that the system should support multiple build agents as this allows you to run so many parts of the pipeline in parallel. Of course, it's not too difficult to do this without the support of your CI system - that is to say, you can do a similar thing using Jenkins by explicitly instructing your scripts where to deploy your project or run your tests, but having your agents managed by the CI system is actually hugely valuable, and a lot easier in my opinion.

As far as build tools/scripts/languages are concerned, this system is largely agnostic. It really doesn't matter whether you use Ant, NAnt, Gradle or Maven; they all support the functionality required to get this system up and running (namely the ability to target specific build phases).

However, Maven does make hard work of this in a couple of ways. Firstly, because of the way Maven lifecycles work, you cannot invoke the "deploy" phase in an isolated way: it implicitly calls all the preceding phases, such as the compile and test phases. If your tests are bound to one of these phases, and they take a long time to run, then this can make your deploy seem to take a lot longer than you would expect. In this instance there's a workaround (you can skip the tests using -DskipTests), but this doesn't work for all the other phases which are implicitly called.

Another drawback with Maven is the way it uses snapshot and release builds. Ultimately we want to create a release build, but at the point of check-in we want a snapshot build. This suggests that at some point in the pipeline we're going to have to recompile in "release mode", which in my book is a bad thing, because it means we have to run ALL of the tests again. As usual, there is a workaround! Axel Fontaine, a software development consultant in Munich, has written a great blog post on how to create a release in Maven 2 without using the release plugin. Alternatively, and this is my preferred solution, you can just make sure your CI builds are not snapshots, by running the Maven release prepare command in one of your pipeline stages.
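The lifecycle point made above, that asking Maven for one phase implicitly runs every phase before it, can be modelled in a few lines. The list below is an abbreviated version of Maven's default lifecycle (the full lifecycle has more intermediate phases), and `phases_run_by` is a hypothetical helper written for this illustration.

```python
# Abbreviated list of phases in Maven's default lifecycle, in execution order.
DEFAULT_LIFECYCLE = [
    "validate",
    "compile",
    "test",
    "package",
    "verify",
    "install",
    "deploy",
]


def phases_run_by(phase):
    """Phases executed for `mvn <phase>`: everything up to and including it."""
    index = DEFAULT_LIFECYCLE.index(phase)
    return DEFAULT_LIFECYCLE[: index + 1]


print(phases_run_by("test"))    # ['validate', 'compile', 'test']
print(phases_run_by("deploy"))  # all seven phases, ending with 'deploy'
```

Note that -DskipTests does not remove any phase from this sequence; it only tells the test plugin not to execute the tests when the test phase is reached.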

* A footnote about the Hudson/Jenkins "thing": It's a little confusing because there's still Hudson, which is owned by Oracle. The whole thing came about when there was a dispute between Oracle, the "owners" of Hudson, and Kohsuke Kawaguchi along with most of the rest of the Hudson community. The story goes that Kawaguchi moved the codebase to GitHub and Oracle didn't like that idea, and so the split started.

References

DevOps Ramblings http://jamesbetteley.wordpress.com/

Caplin Systems Tech blog http://blog.caplin.com/

Continuous Delivery Book http://martinfowler.com/books.html#continuousDelivery

Dave Farley http://www.davefarley.net/

Jez Humble http://continuousdelivery.com/

Maven http://maven.apache.org/

Sonar http://www.sonarsource.org/

Jenkins CI http://jenkins-ci.org/

Hudson http://hudson-ci.org/

Centrum Systems http://www.centrumsystems.com.au/

GO CI from Thoughtworks http://www.thoughtworks-studios.com/go-agile-release-management

Bamboo http://www.atlassian.com/software/bamboo/

Nant http://nant.sourceforge.net/

Gradle http://www.gradle.org/

Sam Newman's blog http://www.magpiebrain.com/

Cruise Control http://cruisecontrol.sourceforge.net/

Axel Fontaine's blog http://www.axelfontaine.com/2011/01/maven-releases-on-steroids-adios.html

This article was originally published in the Summer 2011 issue of Methods & Tools
